Apple's Unexpected Advantage in the AI Race

By adlrocha

AI Summary

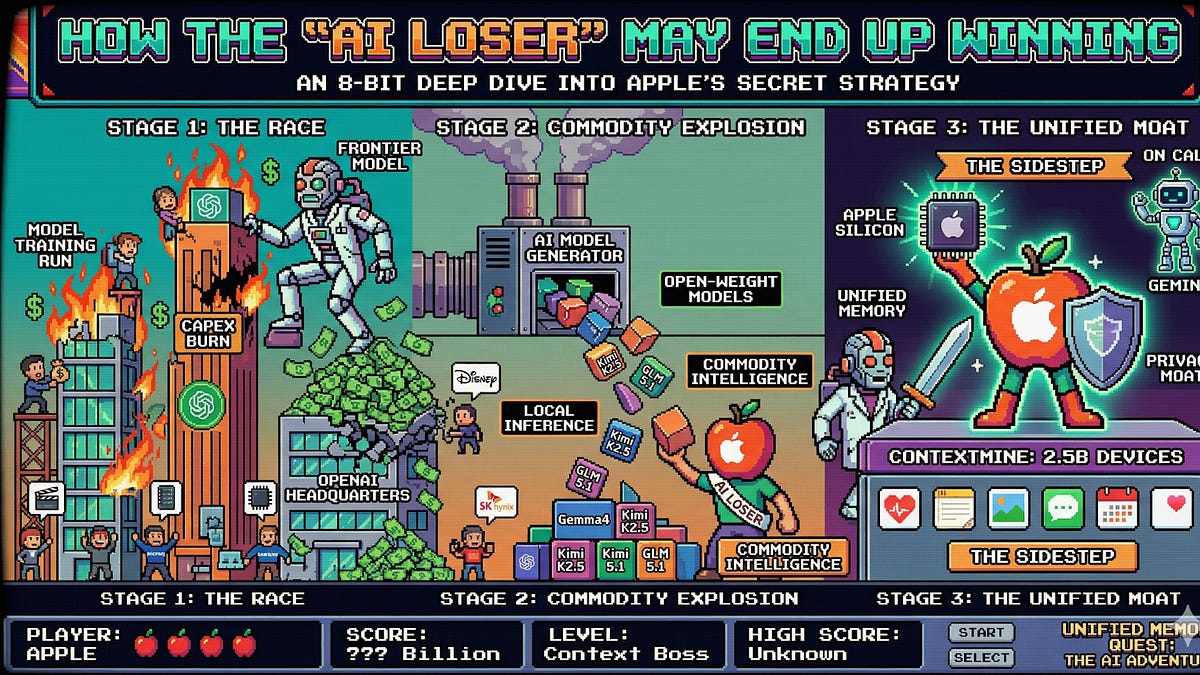

In recent years, the race to build the most advanced AI models has intensified, with companies investing heavily in infrastructure and research. But as model intelligence becomes commoditized, the landscape is shifting. While companies like OpenAI burn through vast resources to maintain their competitive edge, Apple has taken a different path. Despite being perceived as lagging in AI, Apple is well positioned thanks to its existing ecosystem of 2.5 billion devices, rich with user context and data.

Apple's approach relies on hardware/software co-design, particularly its unified memory architecture, in which the CPU and GPU share a single pool of memory. This architecture, originally designed for efficiency and performance, has proven advantageous for running large language models (LLMs) locally, reducing the need for extensive cloud infrastructure.
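To make the local-inference point concrete, here is a rough back-of-the-envelope sketch of how much memory a model's weights need at different precisions. The 7B parameter count, the 16 GiB device, and the 0.75 headroom factor are illustrative assumptions, not figures from the article, and the estimate ignores the KV cache and activations.

```python
def weights_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_memory(params_billion: float, bits_per_weight: int,
                   memory_gib: float, headroom: float = 0.75) -> bool:
    """True if the weights fit in the share of memory we allow the model to use.

    `headroom` is an assumed fraction of total memory available to the model;
    the rest is left for the OS and other apps.
    """
    return weights_gib(params_billion, bits_per_weight) <= memory_gib * headroom


if __name__ == "__main__":
    # Hypothetical scenario: a 7B-parameter model on a 16 GiB device.
    for bits in (16, 8, 4):
        size = weights_gib(7, bits)
        ok = fits_in_memory(7, bits, 16)
        print(f"7B model at {bits}-bit: {size:.1f} GiB of weights, fits: {ok}")
```

Under these assumptions, quantizing the weights is what brings a mid-sized model within reach of a consumer device, and a shared memory pool means those weights do not have to be copied between separate CPU and GPU memories.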

The commoditization of AI models means that less capable models are rapidly catching up to frontier models, reducing the competitive advantage of having the 'best' model. This shift has led companies like Anthropic to focus on capturing the usage layer, creating tools that integrate seamlessly into existing workflows, thereby locking users into their ecosystem.

Apple, on the other hand, capitalizes on its privacy-centric approach, keeping user data on-device and maintaining a strong context layer. This strategy not only aligns with its long-standing privacy stance but also gives it a distinctive position in an AI market where user context becomes the new moat.

The Gemini deal, in which Apple licenses Google's frontier model for complex queries, exemplifies its strategy of leveraging external AI capabilities while retaining control over the context and user experience. This approach lets Apple avoid the massive infrastructure costs of developing and maintaining frontier AI models.
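The split between on-device handling and a licensed frontier model can be pictured as a simple router. The sketch below is purely illustrative: `route_query`, `SHAREABLE_FIELDS`, the word-count heuristic, and the threshold are all hypothetical and say nothing about how Apple actually decides where a query runs.

```python
from dataclasses import dataclass

# Hypothetical allow-list: the only context fields that may leave the device.
SHAREABLE_FIELDS = {"locale"}


@dataclass
class Route:
    target: str         # "on_device" or "cloud"
    context_sent: dict  # the slice of user context that accompanies the query


def route_query(query: str, user_context: dict,
                complexity_threshold: int = 20) -> Route:
    """Send simple queries to the local model and complex ones to the cloud.

    Word count stands in for query complexity here; a real system would
    use a far richer signal.
    """
    is_complex = len(query.split()) > complexity_threshold
    if is_complex:
        # Forward only the allow-listed fields; everything else stays local.
        shared = {k: v for k, v in user_context.items() if k in SHAREABLE_FIELDS}
        return Route("cloud", shared)
    # The local model can see the full context because nothing leaves the device.
    return Route("on_device", dict(user_context))


if __name__ == "__main__":
    ctx = {"locale": "en_US", "calendar": ["dentist 3pm"], "contacts": ["Alex"]}
    print(route_query("set a timer for ten minutes", ctx))
    print(route_query(" ".join(["word"] * 30), ctx))
```

The design point this illustrates is that the party holding the context decides what the external model gets to see, whichever model ends up answering.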

Apple's unified memory architecture, a product of its focus on hardware/software integration, has unexpectedly become a key asset in the AI domain. It supports efficient local inference, enabling complex models to run on consumer devices without significant performance trade-offs.

The success of Apple's strategy hinges on its ability to integrate AI seamlessly into its ecosystem, much as the App Store became a platform for developers. By providing the best environment for AI models to operate in, Apple could become the go-to platform for AI applications, even if it doesn't lead in model development.

While the future remains uncertain, Apple's position, with its vast device network and focus on privacy and efficiency, could prove advantageous in an AI-driven world. Whether this outcome is the result of strategic foresight or fortunate circumstances, Apple's foundation seems well-suited for the evolving AI landscape.

Key Concepts

AI commoditization refers to the process by which AI technology becomes widely accessible and affordable, reducing the competitive advantage of having exclusive access to advanced AI models.

User context as a moat refers to the strategic advantage gained by companies that can leverage detailed user data and context to enhance AI capabilities and user experience.

Category

AI

Summarized by Mente
