The Evolution of Intelligence: From Data to AGI

2026/04/11

AI Summary

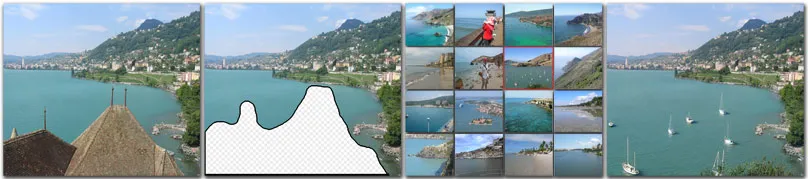

In a memorable lecture, Professor Alyosha Efros revisited the groundbreaking work of Hays & Efros (2007) on scene completion, demonstrating that a simple approach backed by a vast dataset can yield impressive results. The method matched a query image against similar scenes from a massive image corpus, highlighting the power of data over complex algorithms. This principle aligns with the insights of Halevy, Norvig & Pereira (2009) and Richard Sutton's 'The Bitter Lesson' (2019), both of which argue that simple models with extensive data often outperform complex ones.
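The matching step described above can be illustrated with a minimal sketch. This is not the Hays & Efros implementation: the three-number descriptors, the `nearest_scene` helper, and the use of plain Euclidean distance are all assumptions made for illustration, standing in for whatever scene descriptor and distance a real system would use.

```python
import math


def nearest_scene(query, corpus):
    """Return (index, distance) of the corpus descriptor closest to `query`.

    `query` is a list of floats and `corpus` is a list of such lists.
    Euclidean distance is an illustrative assumption, not the original method.
    """
    best_index, best_distance = -1, math.inf
    for index, descriptor in enumerate(corpus):
        distance = math.dist(query, descriptor)
        if distance < best_distance:
            best_index, best_distance = index, distance
    return best_index, best_distance


# Toy corpus of hypothetical scene descriptors (made-up numbers).
corpus = [
    [0.9, 0.1, 0.0],
    [0.1, 0.8, 0.1],
    [0.0, 0.2, 0.9],
]
query = [0.2, 0.7, 0.1]

index, distance = nearest_scene(query, corpus)

# Adding more scenes can only keep or shrink the best distance.
larger_corpus = corpus + [[0.2, 0.7, 0.2]]
_, larger_distance = nearest_scene(query, larger_corpus)
```

The algorithm never changes here; only the corpus grows. Because every extra scene is one more candidate match, the best distance can stay the same or shrink but never grow, which is the intuition behind letting data do the work.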

The Scaling Hypothesis, illustrated by the evolution of GPT models, suggests that intelligence emerges from scaling simple neural networks with vast data and computational power. GPT-1's initial limitations were overcome by GPT-2's expanded parameters and data, leading to significant improvements in text generation. This trend continued, with larger models achieving remarkable capabilities, although they still falter on tasks requiring genuine understanding and reasoning.

The distinction between correlation and causation becomes crucial when evaluating AI's intelligence. While LLMs can produce convincing outputs, they often lack true causal reasoning, leading to 'spiky intelligence': impressive in some areas but flawed in others. This issue is evident in tasks like determining the best way to reach a car wash, where models fail to apply common-sense reasoning.

The ARC Prize's benchmarks challenge LLMs by placing them in novel environments without prior knowledge, highlighting their reliance on existing data. Unlike humans, who can adapt and learn from new experiences, LLMs struggle without pre-existing patterns to draw from. This underscores the need for a new approach to achieve artificial general intelligence (AGI).

Raymond Cattell's distinction between crystallized and fluid intelligence provides a framework for understanding these challenges. While LLMs excel in crystallized intelligence, they lack the fluid intelligence necessary for novel problem-solving. Human infants demonstrate an innate ability to reason causally, suggesting that fluid intelligence is foundational to learning.

The path to AGI may require a shift from scaling data and compute to developing architectures that learn dynamically through interaction. Current advancements in LLMs, such as RLHF and retrieval-augmented generation, improve performance but remain limited by their reliance on pre-existing data. To achieve true AGI, we must explore new methods that enable models to form and revise hypotheses in real-time, much like humans do when learning from their environment.

Key Concepts

The Scaling Hypothesis: The idea that intelligence can emerge from scaling simple neural networks with sufficient data and computational power, allowing capabilities like reasoning and generalization to develop.

The Bitter Lesson: A principle in AI that suggests the most significant advancements come from leveraging computation and data rather than injecting human knowledge or intuition into models.

Category

AI

Original source

https://pleasedontcite.me/learning-backwards/

Summarized by Mente
