Enhancing Coding Agents with Literature-Driven Research for Optimizations

By Alex Kim

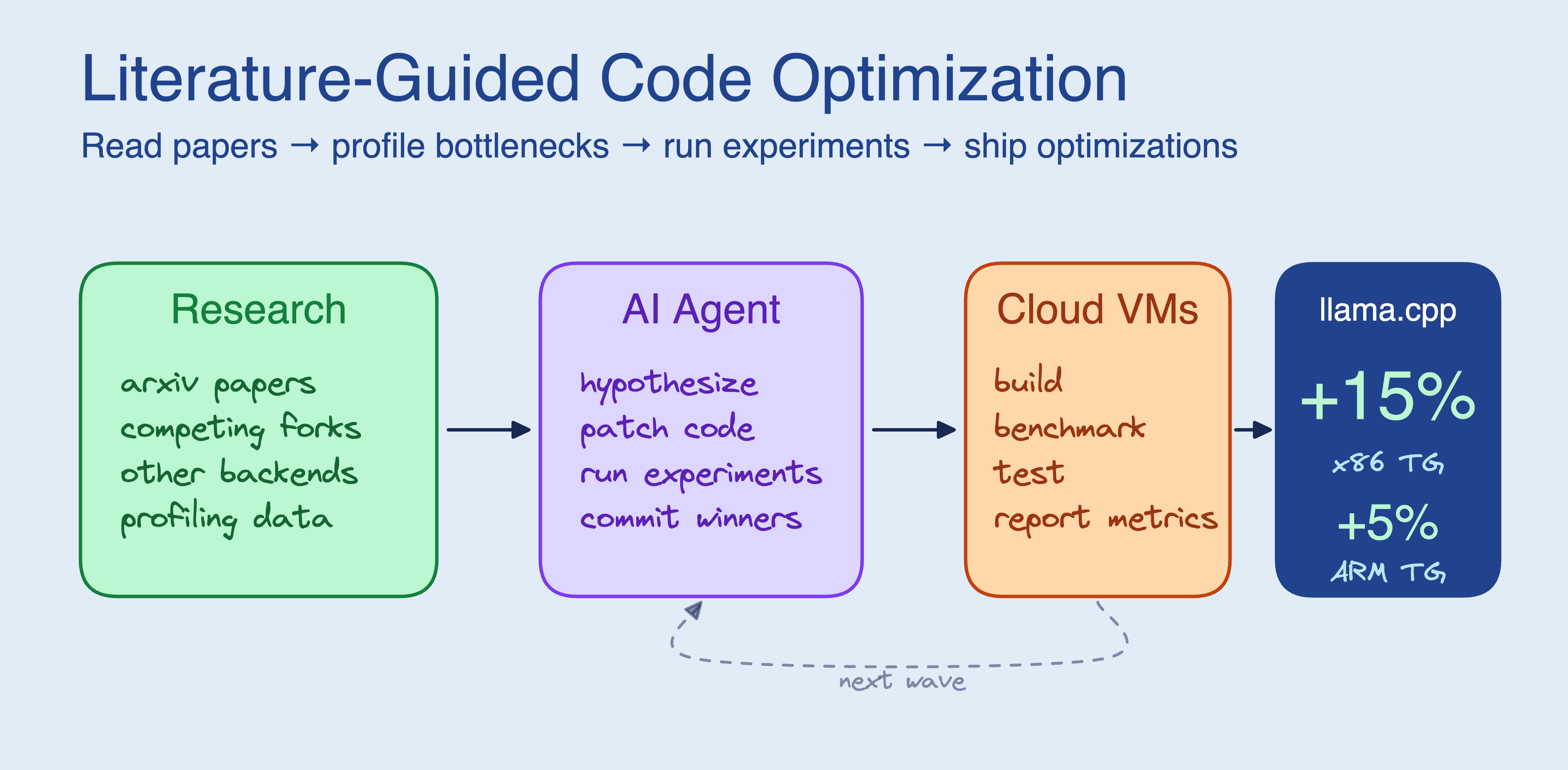

Coding agents can find better software optimizations when they run a literature search before touching code. By studying relevant papers and examining competing projects, an agent can uncover optimizations it would otherwise miss. The approach was tested on llama.cpp using four cloud VMs. It produced five optimizations that made flash attention text generation 15% faster on x86 and 5% faster on ARM.

## The Power of Research-Driven Agents

Agents that read academic papers and analyze competing projects before coding can identify optimizations that code-only agents overlook. For example, the literature research revealed operator fusions that exist in the CUDA and Metal backends but are absent from the CPU implementation. More than 30 experiments were run, and five succeeded, including kernel fusions and adaptive parallelization. The largest improvement came from fusing three passes over flash attention's QK tile into a single AVX2 FMA loop.
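The source does not say what the three passes over the QK tile are. The sketch below assumes, hypothetically, that they are: scaling the raw q·k scores, adding the attention mask, and finding the tile maximum for the online softmax. Under that assumption, fusing the passes turns scale-and-mask into one multiply-add per element, which is what an AVX2 FMA instruction computes, and tracks the maximum in the same loop. This is scalar Python that illustrates the pass structure only. It is not llama.cpp's kernel, and the function names are illustrative.

```python
import math


def qk_tile_unfused(tile, scale, mask):
    """Three separate passes over a tile of raw q.k scores (assumed pass split)."""
    scaled = [s * scale for s in tile]              # pass 1: scale
    masked = [s + m for s, m in zip(scaled, mask)]  # pass 2: add mask (-inf = masked out)
    tile_max = max(masked)                          # pass 3: max for the online softmax
    return masked, tile_max


def qk_tile_fused(tile, scale, mask):
    """One pass: multiply-add plus running max, as a single SIMD loop would do it."""
    out = [0.0] * len(tile)
    tile_max = -math.inf
    for i, (s, m) in enumerate(zip(tile, mask)):
        v = s * scale + m  # in AVX2 code this would be one FMA per lane
        out[i] = v
        if v > tile_max:
            tile_max = v
    return out, tile_max
```

In Python both versions round after the multiply and after the add, so they agree exactly. A real FMA rounds only once, so a fused SIMD kernel can differ from the unfused one in the last bit. This is why correctness checks for such fusions compare results within a tolerance.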

## Case Studies and Results

Earlier experiments with code-only context, such as Karpathy's autoresearch, showed that agents could autonomously improve neural network training scripts. However, some optimization opportunities are not visible in the source code, as with llama.cpp's CPU inference path. In that situation, agents need external knowledge to generate meaningful hypotheses. A research phase gives the agent an understanding of the problem space before it starts making changes.

## Implementing the Research Phase

The enhanced autoresearch loop adds a research step in which the agent reads papers, studies forks, and explores other projects, much as a senior engineer would before tackling unfamiliar code. The agent then writes its own benchmark script and correctness checks, and it uses SkyPilot to distribute experiments across cloud VMs. Each experiment runs on its own VM. Results are checked, and winning changes are committed.
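The loop described above can be sketched as follows. This is a minimal illustration under stated assumptions. `research_phase`, `run_on_vm`, and `autoresearch_round` are hypothetical names. A thread pool stands in for the cloud VMs, so this does not use SkyPilot's API. The `min_speedup` acceptance threshold is an assumption; only the count of four VMs comes from the source.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Result:
    hypothesis: str
    correct: bool   # did the change pass the agent's correctness checks?
    speedup: float  # candidate throughput / baseline throughput


def research_phase(sources):
    """Hypothetical: flatten ideas gathered from papers, forks, and other projects."""
    return [idea for ideas in sources.values() for idea in ideas]


def run_on_vm(hypothesis, benchmark):
    """Stand-in for running one experiment on its own cloud VM."""
    correct, speedup = benchmark(hypothesis)
    return Result(hypothesis, correct, speedup)


def autoresearch_round(sources, benchmark, n_vms=4, min_speedup=1.01):
    hypotheses = research_phase(sources)
    # Fan experiments out in parallel; map() preserves the hypothesis order.
    with ThreadPoolExecutor(max_workers=n_vms) as pool:
        results = list(pool.map(lambda h: run_on_vm(h, benchmark), hypotheses))
    # "Winners" must pass correctness checks and beat the baseline.
    return [r.hypothesis for r in results if r.correct and r.speedup >= min_speedup]
```

For example, consider a fake benchmark that maps each idea to `(correct, speedup)`. In that case, an idea that is fast but fails its correctness check is dropped, and so is one that does not beat the baseline.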

## Successful Optimizations

The research phase led to several successful optimizations: softmax and RMS norm fusions, adaptive parallelization, and a graph-level RMS_NORM + MUL fusion. These changes reduced the number of passes over memory and improved memory access patterns, speeding up both prompt processing and text generation.
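The RMS_NORM + MUL fusion can be illustrated with the sketch below, which shows why it saves a memory pass. Unfused, RMS_NORM writes a normalized intermediate tensor that MUL then reads back to apply the weights. Fused, the weight multiply happens in the same pass as the scaling, so the intermediate is never materialized. This is scalar Python with illustrative function names and an assumed `eps`; it is not ggml's implementation.

```python
import math


def rms_norm_then_mul(x, w, eps=1e-6):
    """Unfused: RMS_NORM produces an intermediate, MUL reads it back."""
    mean_sq = sum(v * v for v in x) / len(x)      # pass 1: reduction
    scale = 1.0 / math.sqrt(mean_sq + eps)
    normed = [v * scale for v in x]                # pass 2: write intermediate
    return [n * wi for n, wi in zip(normed, w)]    # pass 3: read intermediate, multiply


def rms_norm_mul_fused(x, w, eps=1e-6):
    """Fused: scaling and weight multiply share one pass; no intermediate tensor."""
    mean_sq = sum(v * v for v in x) / len(x)      # pass 1: reduction
    scale = 1.0 / math.sqrt(mean_sq + eps)
    return [v * scale * wi for v, wi in zip(x, w)] # pass 2: scale and multiply
```

Both functions perform the same floating-point operations in the same order, so their outputs match exactly. The gain comes only from touching memory one fewer time per row.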

## Challenges and Learnings

Not all experiments succeeded. Many failed because the compiler or the hardware already performed the optimization the agent was attempting. In addition, shared-tenancy EC2 instances introduced run-to-run variance, which made the standard deviation of benchmark results an important quality signal.
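Using standard deviation as a quality signal could look like the sketch below. This is one possible acceptance rule under stated assumptions. The source does not give its rule, so the `k = 2` noise multiple, the 5% relative-stdev cutoff, and the function names are all hypothetical. Samples are assumed to be throughputs (for example tokens/s), where higher is better.

```python
import statistics


def summarize(samples):
    """Mean and sample standard deviation of repeated benchmark runs."""
    return statistics.mean(samples), statistics.stdev(samples)


def is_real_speedup(baseline, candidate, k=2.0, max_rel_stdev=0.05):
    """Hypothetical rule: accept only gains that clearly exceed measurement noise."""
    b_mean, b_sd = summarize(baseline)
    c_mean, c_sd = summarize(candidate)
    # Runs this noisy (e.g. from a busy shared-tenancy host) are not trusted at all.
    if b_sd / b_mean > max_rel_stdev or c_sd / c_mean > max_rel_stdev:
        return False
    # Require the gain to exceed k times the combined standard deviation.
    return c_mean - b_mean > k * (b_sd ** 2 + c_sd ** 2) ** 0.5
```

The first check rejects runs that are too noisy to trust. The second rejects small "wins" that fall within the noise of the two measurements.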

## Conclusion

Adding a research phase to a coding agent's workflow leads to better-informed and more effective optimizations, particularly when the codebase alone does not contain enough information. The approach applies to any project that has a benchmark and a test suite, and it offers a promising path for future software improvements.

Key Concepts

Literature-driven research: A process in which coding agents or developers study academic literature and existing projects to inform and guide software optimization.

Coding agents: Automated systems that can autonomously modify and optimize code based on predefined goals or metrics.

Category: Programming

Original source: https://blog.skypilot.co/research-driven-agents/

Summarized by Mente
