Introspective Diffusion Language Models: Revolutionizing Token Generation

AI Summary

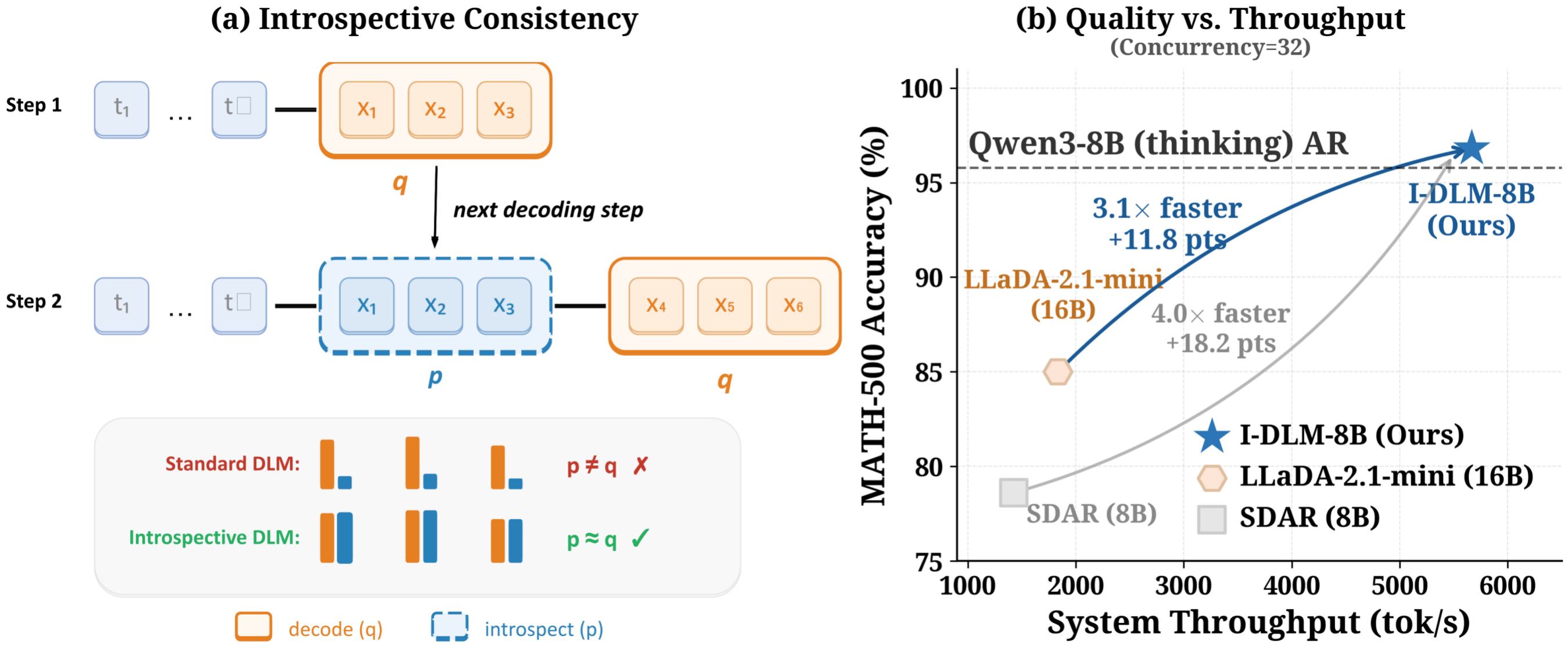

Diffusion language models (DLMs) could remove the sequential bottleneck of autoregressive (AR) decoding. Historically, however, they have lagged behind AR models in quality, a gap attributed to a lack of introspective consistency. The Introspective Diffusion Language Model (I-DLM) addresses this with introspective strided decoding (ISD), which verifies previously generated tokens while advancing new ones in a single forward pass. As a result, I-DLM-8B matches the quality of its same-scale AR counterpart and outperforms LLaDA-2.1-mini while using fewer parameters and delivering significantly higher throughput.

I-DLM's introspective consistency training converts a pretrained AR model using causal attention and an all-masked objective. Because generation and verification of tokens then happen at the same time, decoding becomes more efficient without giving up quality. I-DLM is a drop-in replacement within existing AR infrastructure and requires no custom setup, which eases adoption.

Empirical results show that I-DLM is the first DLM to match the quality of same-scale AR models across 15 benchmarks covering knowledge, reasoning, math, and code. Its throughput is 2.9 to 4.1 times higher than that of LLaDA-2.1-mini, which makes it well suited to high-concurrency environments.

The I-DLM project also provides a speedup factor explorer, which shows how the acceptance rate and the stride size affect decoding speed. The explorer indicates that I-DLM remains efficient in memory-bound regimes, whereas other models become compute-bound early.
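To make the relationship between acceptance rate and stride concrete, here is a minimal sketch under a simplified model. It is an assumption for illustration, not the formula used by the I-DLM explorer: the first token of each pass is always committed, and each further token in the stride is committed only if every earlier one was accepted, with each acceptance independent and of probability `accept_rate`. The function names and the `pass_cost` parameter are hypothetical.

```python
def expected_tokens_per_pass(accept_rate: float, stride: int) -> float:
    """Expected tokens committed per forward pass under the simplified model.

    Assumption (not from the source): token i of the stride is committed only
    if the i earlier tokens were all accepted, each with independent
    probability accept_rate. This gives 1 + p + p^2 + ... + p^(stride-1).
    """
    if not 0.0 <= accept_rate <= 1.0:
        raise ValueError("accept_rate must be in [0, 1]")
    if stride < 1:
        raise ValueError("stride must be >= 1")
    return sum(accept_rate ** i for i in range(stride))


def speedup_factor(accept_rate: float, stride: int, pass_cost: float = 1.0) -> float:
    """Estimated speedup over one-token-per-pass AR decoding.

    pass_cost is the (hypothetical) cost of one strided pass relative to one
    AR pass: close to 1.0 while decoding is memory-bound, larger once the
    extra positions make the pass compute-bound.
    """
    if pass_cost <= 0:
        raise ValueError("pass_cost must be positive")
    return expected_tokens_per_pass(accept_rate, stride) / pass_cost


if __name__ == "__main__":
    for stride in (2, 4, 8):
        for rate in (0.5, 0.8, 0.95):
            print(f"stride={stride} accept_rate={rate}: "
                  f"{speedup_factor(rate, stride):.2f}x")
```

Under this model, a larger stride only pays off when the acceptance rate is high, and any growth in `pass_cost` eats directly into the gain, which is one way to read why staying memory-bound matters.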

Installation and deployment of I-DLM are straightforward, with detailed documentation covering training, inference, and serving. Gated LoRA adapters make the acceleration lossless: the output remains identical to that of the base AR model. This lets developers speed up language model inference without sacrificing quality.
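The summary does not describe how I-DLM wires its gates, so the following is only a generic sketch of a gated low-rank adapter on a single linear layer, assuming a scalar gate. It shows why closing the gate reproduces the base layer's output exactly. All names here (`gated_lora_forward`, `w`, `a`, `b`, `gate`) are illustrative, not part of the I-DLM codebase.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def gated_lora_forward(w: Matrix, a: Matrix, b: Matrix, x: Vector, gate: float) -> Vector:
    """Compute y = W x + gate * B (A x).

    w is the frozen base weight, a and b are the low-rank adapter factors.
    With gate == 0.0 the adapter path is skipped, so the result is exactly
    the base layer's output.
    """
    base = matvec(w, x)
    if gate == 0.0:
        return base
    delta = matvec(b, matvec(a, x))
    return [y + gate * d for y, d in zip(base, delta)]


if __name__ == "__main__":
    w = [[1.0, 0.0], [0.0, 1.0]]   # base weight (2x2)
    a = [[1.0, 1.0]]               # adapter down-projection (rank 1)
    b = [[2.0], [3.0]]             # adapter up-projection
    x = [1.0, 2.0]
    print(gated_lora_forward(w, a, b, x, gate=0.0))  # base path only
    print(gated_lora_forward(w, a, b, x, gate=1.0))  # base plus adapter
```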

Key Concepts

Introspective consistency is a model's ability to verify and agree with the tokens it generates. In other words, when the model re-examines its own output, its predictions should agree with what it produced, which keeps the output coherent.

Introspective Strided Decoding (ISD) is a decoding method that combines token generation and verification in a single forward pass. It improves efficiency while ensuring that each generated token is introspectively consistent with the model's own predictions.
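The idea of verifying earlier tokens while proposing new ones can be illustrated with a toy simulation. This is a sketch under stated assumptions, not the I-DLM algorithm: the "model" is a deterministic toy rule (`toy_next_token`), and the per-position outputs that a single causal forward pass would produce are simulated by calling that rule in a Python loop. The helper names `isd_step`, `isd_decode`, and `oracle_propose` are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

NextToken = Callable[[Sequence[int]], int]
Proposer = Callable[[Sequence[int], int], List[int]]


def toy_next_token(context: Sequence[int]) -> int:
    """Toy stand-in for a model's greedy next-token prediction."""
    return (3 * sum(context) + len(context)) % 7


def oracle_propose(context: Sequence[int], stride: int) -> List[int]:
    """Toy proposer whose drafts are always correct (best case)."""
    out: List[int] = []
    for _ in range(stride):
        out.append(toy_next_token(list(context) + out))
    return out


def isd_step(context: Sequence[int], drafts: Sequence[int], next_token: NextToken,
             propose: Proposer, stride: int) -> Tuple[List[int], List[int]]:
    """One simulated pass: verify the pending drafts, then propose new ones."""
    committed: List[int] = []
    for draft in drafts:
        verified = next_token(list(context) + committed)
        committed.append(verified)   # always commit the verified token
        if draft != verified:
            break                    # later drafts followed a wrong token
    if not committed:                # no drafts yet: emit one token as AR would
        committed.append(next_token(context))
    new_context = list(context) + committed
    return new_context, propose(new_context, stride)


def isd_decode(prompt: Sequence[int], next_token: NextToken, propose: Proposer,
               stride: int, max_new_tokens: int) -> Tuple[List[int], int]:
    """Run simulated passes until max_new_tokens are committed."""
    context, drafts, passes = list(prompt), [], 0
    while len(context) - len(prompt) < max_new_tokens:
        context, drafts = isd_step(context, drafts, next_token, propose, stride)
        passes += 1
    return context[: len(prompt) + max_new_tokens], passes


if __name__ == "__main__":
    tokens, passes = isd_decode([1, 2], toy_next_token, oracle_propose, 4, 8)
    print(tokens, "in", passes, "passes")
```

Because only verified tokens are ever committed, the toy decoder returns the same sequence as plain greedy decoding with `toy_next_token`, whatever the proposer guesses. The proposer's accuracy changes only how many passes are needed.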

Category

AI

Original source

https://introspective-diffusion.github.io/

Summarized by Mente
