It's OK to Compare Floating-Points for Equality

AI Summary

Floating-point numbers often get a bad rap for their inexactness, leading many to adopt epsilon-comparisons as a safety net. However, after years of coding in fields like geometry and simulations, I've found that using epsilons is rarely the best solution. Floating-point numbers are not mysterious black boxes; they are deterministic and standardized, though they can't represent every real number due to finite memory constraints.

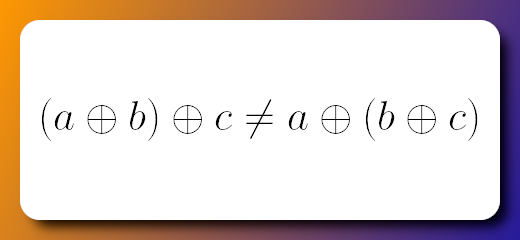

The common belief that floating-point operations are inherently uncertain is misleading. IEEE 754 requires the basic operations (addition, subtraction, multiplication, division, and square root) to be correctly rounded: each result is the exact mathematical result rounded to a representable value, by default the nearest one. This does not mean that mathematical properties like associativity always hold. Adding the same numbers in a different order involves different intermediate roundings, so the results can differ slightly.
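
A minimal C++ sketch of this follows. It assumes IEEE 754 `double` with the default round-to-nearest mode and no flags such as `-ffast-math` that let the compiler reassociate arithmetic. The helpers `sum_left` and `sum_right` are illustrative names.

```cpp
// The same three-term sum under two groupings. Every individual addition
// is correctly rounded, but the intermediate values differ, so the final
// results can differ in the last bit.
double sum_left(double a, double b, double c) { return (a + b) + c; }
double sum_right(double a, double b, double c) { return a + (b + c); }
```

With 0.1, 0.2, and 0.3, `sum_left` returns 0.6000000000000001, while `sum_right` returns the same `double` as the literal `0.6`. Each call is still deterministic. When all intermediate values are exactly representable (for example 0.5 + 0.25 + 0.125), both groupings give exactly 0.875.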

Epsilon-comparisons, while popular, are problematic. They are often hacky, lead to debugging nightmares, and don't always solve the underlying issue. For example, when different parts of a program use different epsilons, the output of one function may not satisfy the invariants that the next function expects of its input, causing unpredictable behavior. Moreover, approximate equality is not transitive: a ≈ b and b ≈ c do not imply a ≈ c, which can break algorithms that assume transitivity.
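
To make the non-transitivity concrete, here is a typical absolute-tolerance predicate in C++. The name `approx_equal` and the values are only illustrative. The epsilon is deliberately large (1.0) so that every number involved is exactly representable.

```cpp
#include <cmath>

// Typical epsilon-comparison: equal if the absolute difference is at most eps.
bool approx_equal(double a, double b, double eps) {
    return std::fabs(a - b) <= eps;
}
```

Here `approx_equal(0.0, 0.75, 1.0)` and `approx_equal(0.75, 1.5, 1.0)` both hold, but `approx_equal(0.0, 1.5, 1.0)` does not. Code that groups or deduplicates values with this predicate, or builds a sort comparator on it, can therefore get results that depend on the order in which values are visited.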

In practical scenarios like grid-based movement in games, relying on epsilons can add unnecessary complexity and inefficiency. A well-tested acceptance radius, or accepting user commands without waiting for animations to finish, can be more effective. Similarly, in spherical linear interpolation (slerp), an arbitrarily chosen epsilon can reduce precision. A threshold tied to the type's precision, such as FLT_EPSILON, or a direct check of the degenerate condition gives better results.
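
One epsilon-free pattern for the movement case is sketched below; it may differ from what the original post recommends. If the final step toward a target sets the position to the target itself, arrival can then be tested with exact `==`. `Vec2`, `step_towards`, `arrived`, and `max_step` are hypothetical names.

```cpp
#include <cmath>

struct Vec2 { float x, y; };

// Moves pos toward target by at most max_step (assumed positive). When the
// remaining distance fits within one step, the target itself is returned,
// so the caller can later test arrival with exact ==.
Vec2 step_towards(Vec2 pos, Vec2 target, float max_step) {
    float dx = target.x - pos.x;
    float dy = target.y - pos.y;
    float dist = std::sqrt(dx * dx + dy * dy);
    if (dist <= max_step) {
        return target;  // snap: the result is exactly the target
    }
    float k = max_step / dist;  // fraction of the remaining way to travel
    return {pos.x + dx * k, pos.y + dy * k};
}

// Exact comparison is safe because arrival always goes through the snap above.
bool arrived(Vec2 pos, Vec2 target) {
    return pos.x == target.x && pos.y == target.y;
}
```

Call `step_towards` once per frame with `max_step = speed * dt` and a positive speed. It eventually takes the `dist <= max_step` branch, after which the position is bit-for-bit equal to the target.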

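For the slerp case, here is a minimal C++ sketch under stated assumptions: `a` and `b` are unit vectors that are not antipodal, `Vec3` is a hypothetical type, and the only special case is the one where the formula would literally compute 0/0. `std::acos` returns exactly 0 for an input of exactly 1. For small nonzero angles, `std::sin` of a small argument is still accurate, so the weights sin((1 − t)θ)/sin θ and sin(tθ)/sin θ stay well-behaved without a hand-picked threshold. The clamp handles a rounded dot product that lands slightly outside [−1, 1], where `std::acos` would return NaN.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { double x, y, z; };

// Spherical linear interpolation between unit vectors a and b.
// Assumption: a and b are normalized and not antipodal.
Vec3 slerp(Vec3 a, Vec3 b, double t) {
    double d = a.x * b.x + a.y * b.y + a.z * b.z;
    d = std::clamp(d, -1.0, 1.0);  // rounding can push |d| slightly past 1
    const double theta = std::acos(d);
    const double s = std::sin(theta);
    if (s == 0.0) {
        // Reached only when the rounded dot product is exactly 1, i.e. a and b
        // are (nearly) identical; plain linear interpolation is accurate here.
        return {a.x + (b.x - a.x) * t, a.y + (b.y - a.y) * t, a.z + (b.z - a.z) * t};
    }
    const double wa = std::sin((1.0 - t) * theta) / s;
    const double wb = std::sin(t * theta) / s;
    return {wa * a.x + wb * b.x, wa * a.y + wb * b.y, wa * a.z + wb * b.z};
}
```
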
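When computing vector lengths, precision issues arise with very small vectors: squaring tiny components can underflow to zero. A more robust approach rescales (normalizes) the vector, for example by its largest component, before computing its length, which avoids the precision loss. When solving linear systems, an arbitrary epsilon on the determinant can give misleading results, rejecting solvable systems or accepting badly conditioned ones. It's better to let users set the threshold or to use a more reliable measure such as the condition number.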
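
For the small-vector case, here is a sketch in C++. It assumes IEEE 754 `float` and uses the hypothetical names `Vec2`, `naive_length`, `scaled_length`, and `normalized`. Dividing by the largest absolute component first makes one component exactly ±1. The squared length of the rescaled vector then lies in [1, 2] and cannot underflow, so only the exact zero vector needs a special case.

```cpp
#include <algorithm>
#include <cmath>

struct Vec2 { float x, y; };

// Textbook formula: squaring very small components can underflow to zero.
float naive_length(Vec2 v) { return std::sqrt(v.x * v.x + v.y * v.y); }

// Rescale by the largest absolute component first. One component of the
// rescaled vector is exactly +-1, so its squared length lies in [1, 2].
float scaled_length(Vec2 v) {
    const float m = std::max(std::fabs(v.x), std::fabs(v.y));
    if (m == 0.0f) return 0.0f;  // exact zero vector: no epsilon involved
    const float sx = v.x / m, sy = v.y / m;
    return m * std::sqrt(sx * sx + sy * sy);
}

// Same idea for normalization; only the exact zero vector is special.
Vec2 normalized(Vec2 v) {
    const float m = std::max(std::fabs(v.x), std::fabs(v.y));
    if (m == 0.0f) return v;
    const float sx = v.x / m, sy = v.y / m;
    const float len = std::sqrt(sx * sx + sy * sy);  // in [1, sqrt(2)]
    return {sx / len, sy / len};
}
```

For `{1e-30f, 1e-30f}`, `naive_length` returns 0 because each square (about 1e-60) underflows in single precision. `scaled_length` returns about 1.414e-30. In practice, `std::hypot` from `<cmath>` is specified to avoid this kind of intermediate underflow and overflow when computing the length itself.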

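For the linear-system case, here is a C++ sketch of a "report, don't decide" approach for a 2×2 system, assuming Cramer's rule is acceptable at this size. Instead of comparing the determinant against a built-in epsilon, `solve2x2` (a hypothetical helper) returns the 1-norm condition number alongside the solution. The caller then applies whatever limit suits its data. Only an exactly zero determinant is treated specially.

```cpp
#include <algorithm>
#include <cmath>
#include <limits>

// Solution of [a b; c d] * (x, y) = (e, f) plus its 1-norm condition number.
struct Solve2x2Result {
    double x, y;  // NaN when the matrix is exactly singular
    double cond;  // ||A||_1 * ||A^-1||_1, +inf when exactly singular
};

Solve2x2Result solve2x2(double a, double b, double c, double d,
                        double e, double f) {
    const double det = a * d - b * c;
    if (det == 0.0) {  // exact check: the only case where Cramer's rule divides by zero
        const double nan = std::numeric_limits<double>::quiet_NaN();
        return {nan, nan, std::numeric_limits<double>::infinity()};
    }
    // Max column sums of |A| and of |adj(A)|, where A^-1 = adj(A) / det.
    const double norm_a = std::max(std::fabs(a) + std::fabs(c),
                                   std::fabs(b) + std::fabs(d));
    const double norm_adj = std::max(std::fabs(d) + std::fabs(c),
                                     std::fabs(b) + std::fabs(a));
    const double cond = norm_a * norm_adj / std::fabs(det);
    return {(e * d - b * f) / det, (a * f - e * c) / det, cond};
}
```

As a rough rule of thumb, a condition number of 10^k can cost about k decimal digits of accuracy in the solution. This gives the caller a scale-independent way to choose its threshold.
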
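For ray-box intersections, the code can handle edge cases such as a zero direction component without epsilons. IEEE 754 defines division of a nonzero number by zero to yield a signed infinity instead of an error. In convex hull computations, rounding errors in geometric predicates can produce inconsistent answers that break the algorithm. Solutions include rounding the inputs or using exact arithmetic.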
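
Below is a C++ sketch of an epsilon-free slab test. It assumes IEEE 754 `double` semantics (no `-ffast-math`) and a hypothetical `Vec3` type; it is a common formulation, not necessarily the exact code from the original post. When a direction component is zero, `1.0 / d` is ±infinity. The slab for that axis then yields either an unbounded interval (origin between the planes) or an empty one (origin outside), with no branch.

```cpp
#include <algorithm>
#include <limits>
#include <utility>

struct Vec3 { double x, y, z; };

// Slab test: does the ray origin + t * dir, t >= 0, hit the box [lo, hi]?
// No special case for zero direction components: IEEE 754 gives
// 1.0 / 0.0 == +inf and 1.0 / -0.0 == -inf, and the interval logic
// below works with those infinities directly.
// Caveat (not handled here): if the origin lies exactly on a slab plane
// and that direction component is zero, 0 * inf produces NaN.
bool ray_hits_box(Vec3 origin, Vec3 dir, Vec3 lo, Vec3 hi) {
    const double o[3] = {origin.x, origin.y, origin.z};
    const double d[3] = {dir.x, dir.y, dir.z};
    const double bmin[3] = {lo.x, lo.y, lo.z};
    const double bmax[3] = {hi.x, hi.y, hi.z};
    double tmin = 0.0;
    double tmax = std::numeric_limits<double>::infinity();
    for (int i = 0; i < 3; ++i) {
        const double inv = 1.0 / d[i];            // may be +inf or -inf
        double t1 = (bmin[i] - o[i]) * inv;       // ray parameters at the
        double t2 = (bmax[i] - o[i]) * inv;       // two planes of this slab
        if (t1 > t2) std::swap(t1, t2);
        tmin = std::max(tmin, t1);
        tmax = std::min(tmax, t2);
    }
    return tmin <= tmax;
}
```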

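For the convex-hull case, here is a sketch of the "round the inputs" option in C++. It assumes coordinates can be snapped to a grid with a caller-chosen step (`grid`, a hypothetical parameter). It also assumes snapped coordinates stay below 2^30 in magnitude, so every orientation test fits exactly in a 64-bit integer. The sketch uses Andrew's monotone chain, which may not be the algorithm from the original post. The point is that the orientation predicate becomes exact, so the algorithm never sees contradictory answers.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct IPoint { long long x, y; };

// Snap a coordinate pair to an integer grid with the given step.
IPoint snap(double x, double y, double grid) {
    return {std::llround(x / grid), std::llround(y / grid)};
}

// Exact sign of the cross product (b - a) x (c - a): positive for a left
// turn, zero for collinear. Exact as long as |coordinates| < 2^30.
long long cross(IPoint a, IPoint b, IPoint c) {
    return (b.x - a.x) * (c.y - a.y) - (b.y - a.y) * (c.x - a.x);
}

// Andrew's monotone chain: hull vertices in counter-clockwise order,
// with collinear boundary points removed.
std::vector<IPoint> convex_hull(std::vector<IPoint> pts) {
    std::sort(pts.begin(), pts.end(), [](IPoint p, IPoint q) {
        return p.x < q.x || (p.x == q.x && p.y < q.y);
    });
    pts.erase(std::unique(pts.begin(), pts.end(),
                          [](IPoint p, IPoint q) { return p.x == q.x && p.y == q.y; }),
              pts.end());
    if (pts.size() < 3) return pts;

    std::vector<IPoint> hull(2 * pts.size());
    std::size_t k = 0;
    for (const IPoint& p : pts) {  // lower hull, left to right
        while (k >= 2 && cross(hull[k - 2], hull[k - 1], p) <= 0) --k;
        hull[k++] = p;
    }
    const std::size_t lower_size = k + 1;
    for (std::size_t i = pts.size() - 1; i > 0; --i) {  // upper hull, right to left
        const IPoint& p = pts[i - 1];
        while (k >= lower_size && cross(hull[k - 2], hull[k - 1], p) <= 0) --k;
        hull[k++] = p;
    }
    hull.resize(k - 1);  // the last point repeats the first
    return hull;
}
```
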
However, there are cases where epsilons are useful, such as sanitizing user input in visualization libraries or writing test cases for math libraries. In these scenarios, carefully chosen epsilons can prevent rendering artifacts or ensure test robustness.
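
For the test-suite case, here is a sketch of a tolerance check of the kind a math library's tests might use. The helper name and the combination of a relative and an absolute tolerance are assumptions, not taken from the original post. Scaling the tolerance with the magnitude of the values keeps one check meaningful across very different ranges. The absolute term handles expected values of zero.

```cpp
#include <algorithm>
#include <cmath>

// Passes if actual is within rel_tol of expected, relative to the larger of
// the two magnitudes, or within abs_tol in absolute terms (needed when the
// expected value is zero, where any relative tolerance collapses to 0).
bool close_enough(double actual, double expected, double rel_tol, double abs_tol) {
    const double diff = std::fabs(actual - expected);
    const double scale = std::max(std::fabs(actual), std::fabs(expected));
    return diff <= std::max(rel_tol * scale, abs_tol);
}
```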

Ultimately, while epsilons can be useful, they are not a one-size-fits-all solution. What matters is thinking carefully about the specific problem and its context rather than blindly following convention.

Key Concepts

Floating-point numbers represent real numbers using a fixed amount of memory: a sign, an exponent, and a significand. Because their precision is finite, they cannot represent every real number exactly. A value such as 0.1, for example, is stored as the nearest representable number.
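
Here is a small C++ illustration, assuming IEEE 754 single precision for `float`. It shows that the gap between neighboring representable values scales with their magnitude. `gap_above` is a hypothetical helper.

```cpp
#include <cmath>
#include <limits>

// Distance from x to the next representable float above it.
float gap_above(float x) {
    return std::nextafter(x, std::numeric_limits<float>::infinity()) - x;
}
```

Just above 1.0 the gap is `std::numeric_limits<float>::epsilon()`, the same value as `FLT_EPSILON` (2^-23). Just above 1e8 it is 8.0, which is one reason a single fixed absolute epsilon cannot suit every magnitude.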

Epsilon-comparisons treat two floating-point numbers as equal when they differ by at most a small margin called epsilon (for example, |a − b| ≤ ε). They are commonly used to absorb rounding errors in floating-point arithmetic.

Category

Programming

Original source

https://lisyarus.github.io/blog/posts/its-ok-to-compare-floating-points-for-equality.html

Summarized by Mente
