Why Model Context Protocol (MCP) Outshines Skills for AI Integration

AI Summary

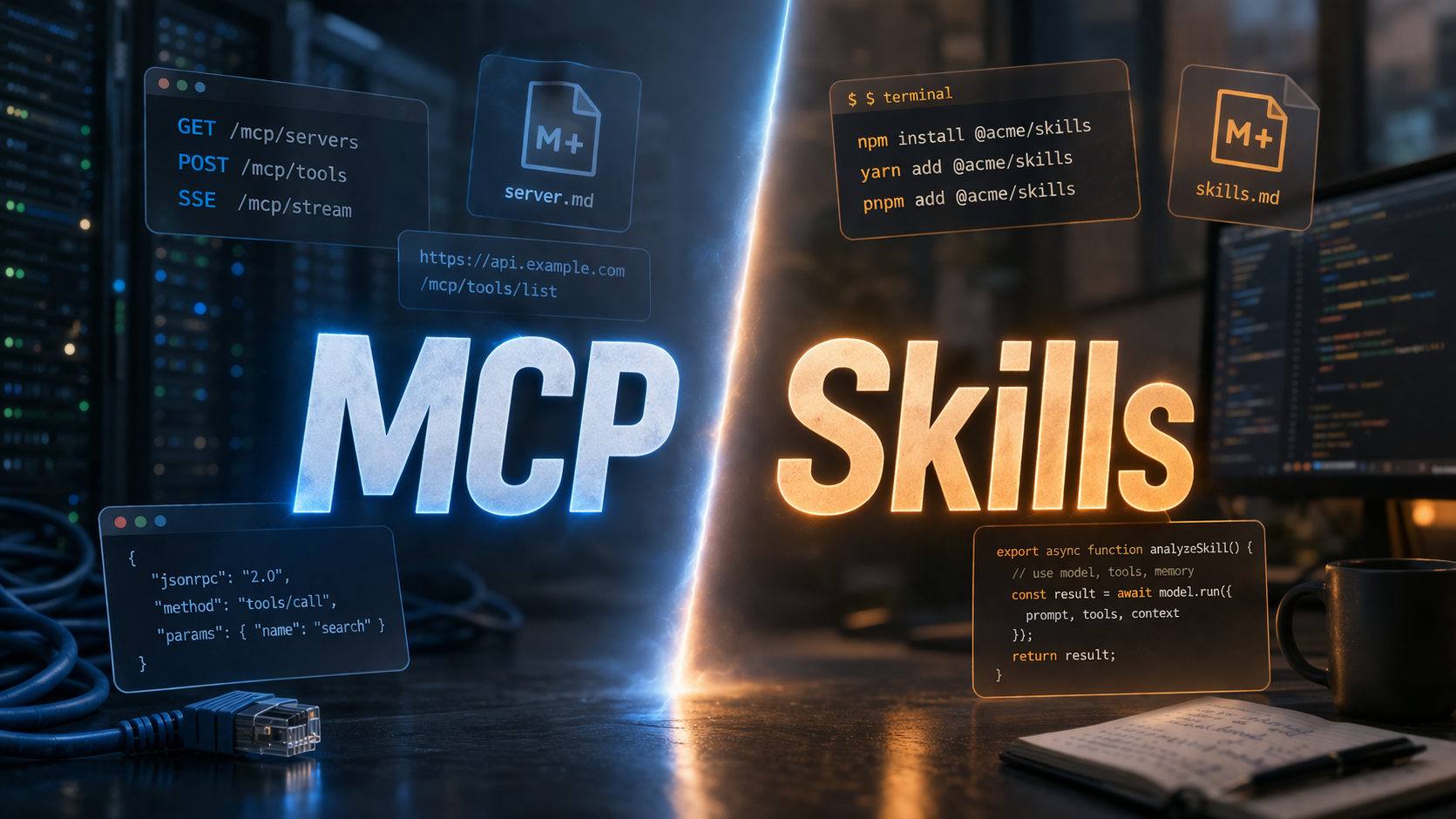

In the rapidly evolving AI landscape, there's a growing push towards adopting 'Skills' as the standard for equipping Large Language Models (LLMs) with capabilities. However, I remain a staunch advocate for the Model Context Protocol (MCP) as a more pragmatic and effective architectural choice for integrating AI with services. While Skills are beneficial for imparting pure knowledge or teaching an LLM to use an existing tool, MCP excels in providing LLMs with actual access to services without the need for cumbersome command-line interfaces (CLIs).

The core philosophy of MCP is API abstraction: the LLM states what it wants done, and the server handles how it gets done. This separation of concerns offers several advantages: zero-install remote usage, seamless automatic updates, graceful authentication, true portability, sandboxing, and smart discovery. Together, these make MCP the stronger choice for service integration, because a remote server needs no local installation or complex setup.
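To make the 'what' versus 'how' split concrete, here is a minimal sketch of the message a client sends to invoke a tool. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The helper `build_tool_call`, the tool name `create_event`, and its arguments are hypothetical and chosen only for illustration; they do not come from the original article or from any real calendar server.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 `tools/call` request.

    The caller names the tool and passes arguments (the "what").
    Nothing here describes how the server carries the action out.
    """
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool name and arguments, for illustration only.
message = build_tool_call(
    1,
    "create_event",
    {"title": "Standup", "start": "2025-01-06T09:00:00Z"},
)
print(message)
```

Note what the request leaves out: no binary path, no shell flags, no local credentials. Those details stay on the server side, which is the property the paragraph above describes.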

In contrast, the reliance on Skills often introduces a host of challenges. Many Skills require dedicated CLIs, which can be problematic in environments that don't support them, such as standard web versions of AI tools like ChatGPT or Perplexity. This leads to deployment issues, secret management nightmares, fragmented ecosystems, and context bloat, where unnecessary information clutters the LLM's context window.

I argue that MCP should be the standard for connecting LLMs to services, providing a clean, strongly-typed interface that is more efficient than a Skill that requires CLI installation and management. For example, Google Calendar should use an OAuth-backed remote MCP rather than a gcal CLI, and browsers like Chrome should expose MCP endpoints for control.
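As a rough illustration of what a 'strongly-typed interface' means here: an MCP server describes each tool with a name, a description, and a JSON Schema under `inputSchema`, so arguments can be checked before anything runs. The sketch below assumes a hypothetical `create_event` tool and uses a deliberately tiny hand-written checker (`validate_arguments`, also hypothetical) that covers only required keys and top-level types. A real client would use a full JSON Schema validator.

```python
# Hypothetical tool descriptor, shaped like an entry in a `tools/list` response.
CREATE_EVENT_TOOL = {
    "name": "create_event",
    "description": "Create a calendar event.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "start": {"type": "string"},
            "duration_minutes": {"type": "integer"},
        },
        "required": ["title", "start"],
    },
}

# Maps the JSON Schema type names used above to Python types.
_JSON_TYPES = {"string": str, "integer": int, "boolean": bool}


def validate_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments fit the schema.

    Covers only a small subset of JSON Schema: required keys,
    unknown keys, and top-level primitive types.
    """
    schema = tool["inputSchema"]
    problems = []
    for key in schema.get("required", []):
        if key not in arguments:
            problems.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unknown argument: {key}")
            continue
        expected = _JSON_TYPES[spec["type"]]
        # bool is a subclass of int in Python, so reject it explicitly for integers.
        wrong_type = not isinstance(value, expected) or (
            expected is int and isinstance(value, bool)
        )
        if wrong_type:
            problems.append(f"{key} should be {spec['type']}")
    return problems


print(validate_arguments(CREATE_EVENT_TOOL, {"title": "Standup"}))
```

The point of the comparison with a CLI-backed Skill is that a malformed call is caught against a declared schema, whereas a mistyped shell flag typically surfaces only as free-form error text the model has to interpret.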

Skills, on the other hand, should focus on pure knowledge and context, such as teaching LLMs how to use existing tools or standardizing workflows. They are ideal for explaining business jargon, communication styles, or handling specific tasks like PDF manipulation.

The terminology might be part of the problem. Skills could be seen as LLM manuals, while MCPs are connectors. Both have their place, but for seamless AI integration, standardized interfaces like MCP are crucial. I maintain a repository of Skills for common procedures and hope the industry doesn't abandon MCP in favor of fragmented CLI solutions.

To address the issue of local-only MCP servers, I developed MCP Nest, which tunnels these servers through the cloud, making them accessible from any device. This ensures that local MCPs can be used universally without exposing machines directly, supporting a more integrated and efficient AI ecosystem.

Key Concepts

MCP is an open protocol that gives LLMs an API abstraction layer over services, so they can use a service without needing to understand how it works internally. It simplifies service access by having the model specify which action to perform, while the server decides how that action is executed.

Skills are modules or scripts that provide LLMs with specific knowledge or the ability to perform tasks, often requiring command-line interfaces (CLIs) for execution. They are typically used to teach LLMs how to use tools or understand specific contexts.

Category

AI

Original source

https://david.coffee/i-still-prefer-mcp-over-skills/

Summarized by Mente
