Zero-Copy GPU Inference with WebAssembly on Apple Silicon

By Agam Brahma

AI Summary

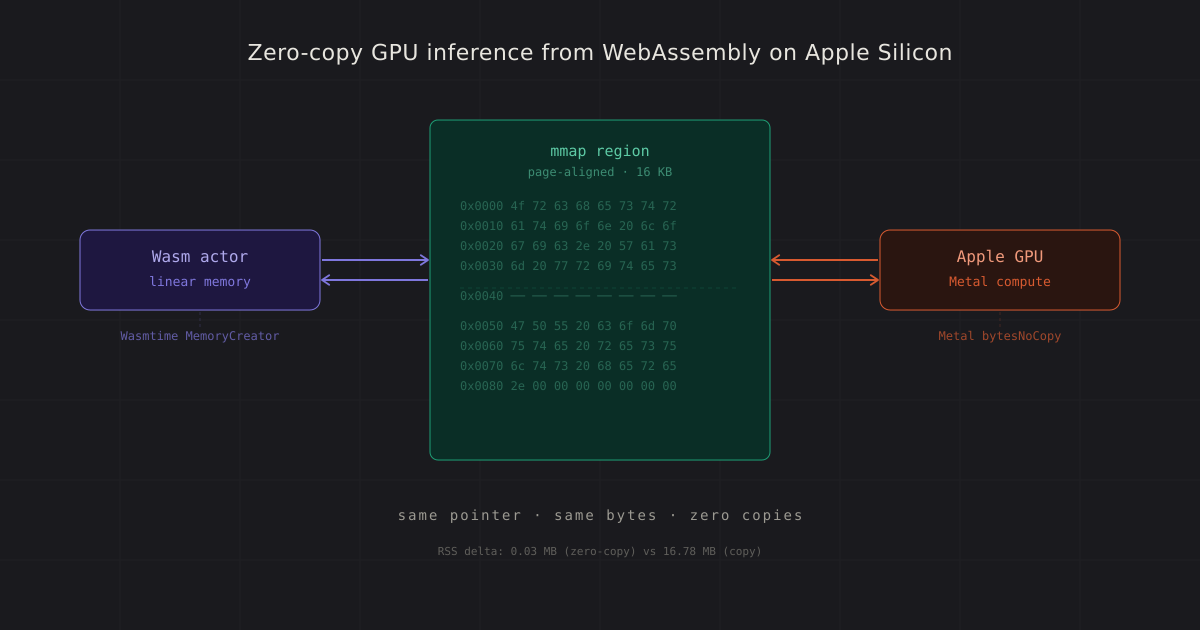

On Apple Silicon, the Unified Memory Architecture lets a WebAssembly module's linear memory be shared directly with the GPU, with no data copying, serialization, or intermediate buffers. The CPU and GPU read and write the same physical bytes. Normally, moving data from a VM sandbox to a GPU means serializing it and copying it across a bus. Because Apple Silicon's CPU and GPU share physical memory, that step disappears, and WebAssembly can act as the control plane while the GPU acts as the compute plane.

I am developing Driftwood, a project that uses this zero-copy capability for stateful AI inference. The process is a three-link chain: mmap provides page-aligned memory, Metal accepts a pointer to that memory without copying it, and Wasmtime supports custom memory allocation so that the module's linear memory can live in the same region. A Wasm module fills a matrix in its linear memory, the GPU computes on it in place, and the Wasm module reads the results directly, with no data duplication.
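
The first link of the chain and the "same physical bytes" idea can be sketched with the standard library alone. This is a minimal illustration, not Driftwood's code: it assumes an anonymous `mmap` region stands in for the Wasm linear memory, and the names `producer` and `consumer` are hypothetical stand-ins for the Wasm side and the GPU side. No Metal or Wasmtime calls are involved.

```python
import ctypes
import mmap

N = 128                 # matrix dimension used in the test described in the text
NBYTES = N * N * 4      # N x N float32 elements


def round_up_to_page(nbytes: int) -> int:
    """Round a byte count up to a whole number of pages."""
    page = mmap.PAGESIZE
    return (nbytes + page - 1) // page * page


def base_address(region: mmap.mmap) -> int:
    """Return the start address of the mapping (for alignment checks only)."""
    probe = ctypes.c_char.from_buffer(region)  # temporary export of the buffer
    addr = ctypes.addressof(probe)
    del probe                                  # release the export again
    return addr


# Anonymous mapping: the OS hands back page-aligned memory.
region = mmap.mmap(-1, round_up_to_page(NBYTES))

# Two typed views over the same bytes. Neither view owns a copy.
producer = memoryview(region).cast("f")   # hypothetical stand-in for the Wasm module
consumer = memoryview(region).cast("f")   # hypothetical stand-in for the GPU

producer[0] = 1.5            # the "Wasm side" writes an input
consumer[1] = consumer[0] * 2  # the "GPU side" reads it and writes a result in place

print("page aligned:", base_address(region) % mmap.PAGESIZE == 0)
print("result seen by producer:", producer[1])
```

Both views are windows onto one allocation, so a write through either is visible through the other without any transfer step. The real chain replaces the second view with a GPU buffer created over the same pointer.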

Testing this setup with a 128x128 matrix multiplication showed zero errors and minimal memory overhead, confirming that the zero-copy path works. The approach matters most for larger workloads such as transformer inference, where memory efficiency is crucial. By sharing memory between Wasm and the GPU, I can run models like Llama 3.2 1B Instruct on an Apple Silicon GPU with low latency.
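
A correctness check of this kind can be sketched as follows. This is an assumption-laden illustration rather than the actual test: `device_matmul_into` is a hypothetical pure-Python stand-in for the GPU kernel that writes into a caller-provided buffer, and the inputs are small integers chosen so that every float32 sum is exact and a tolerance of zero is meaningful.

```python
from array import array


def fill_inputs(n):
    """Build two row-major n x n float32 matrices of small integers."""
    a = array("f", ((i + j) % 7 for i in range(n) for j in range(n)))
    b = array("f", ((i * j) % 5 for i in range(n) for j in range(n)))
    return a, b


def reference_matmul(a, b, n):
    """CPU reference: classic i-j-k loop with a scalar accumulator."""
    c = array("f", [0.0]) * (n * n)
    for i in range(n):
        for j in range(n):
            acc = 0.0
            for k in range(n):
                acc += a[i * n + k] * b[k * n + j]
            c[i * n + j] = acc
    return c


def device_matmul_into(a, b, out, n):
    """Hypothetical stand-in for the GPU kernel: writes results into `out` in place."""
    for idx in range(n * n):
        out[idx] = 0.0
    for i in range(n):
        for k in range(n):
            aik = a[i * n + k]
            for j in range(n):
                out[i * n + j] += aik * b[k * n + j]


def count_mismatches(expected, actual, tol=0.0):
    """Number of elements whose absolute difference exceeds `tol`."""
    return sum(1 for e, g in zip(expected, actual) if abs(e - g) > tol)


def check(n):
    """Run the stand-in kernel and compare it against the reference."""
    a, b = fill_inputs(n)
    out = array("f", [0.0]) * (n * n)
    device_matmul_into(a, b, out, n)
    return count_mismatches(reference_matmul(a, b, n), out)


def footprint_bytes(n, elem_size=4):
    """Bytes needed for two inputs and one output, each n x n."""
    return 3 * n * n * elem_size
```

For scale, `footprint_bytes(128)` is 196608 bytes (192 KiB) for both inputs and the output together. In a zero-copy design that is the whole data footprint, since no second copy of any matrix is made for the GPU.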

The key-value (KV) cache used in transformer models can also be serialized to a standard format and restored later, which is significantly faster than recomputing it. This preserves context across different machines or models and enables stateful actor mobility.
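
The save-and-restore round trip can be sketched as below. The summary does not name the format, so the layout here is a hypothetical one chosen for illustration (an 8-byte length prefix, a JSON header, then raw float32 data); the tensor names and the functions `serialize_kv` and `restore_kv` are likewise assumptions, not Driftwood's API.

```python
import json
import struct
from array import array


def serialize_kv(cache: dict) -> bytes:
    """Pack {name: (shape, array('f'))} into one self-describing blob."""
    header = {}
    payload = bytearray()
    for name, (shape, data) in cache.items():
        raw = data.tobytes()  # native byte order; assumes save and load hosts match
        header[name] = {
            "dtype": "f32",
            "shape": list(shape),
            "offset": len(payload),
            "nbytes": len(raw),
        }
        payload += raw
    head = json.dumps(header).encode("utf-8")
    # Layout (hypothetical): u64 header length, JSON header, raw tensor bytes.
    return struct.pack("<Q", len(head)) + head + bytes(payload)


def restore_kv(blob: bytes) -> dict:
    """Inverse of serialize_kv: rebuild the name -> (shape, data) mapping."""
    (head_len,) = struct.unpack_from("<Q", blob, 0)
    header = json.loads(blob[8:8 + head_len].decode("utf-8"))
    body = memoryview(blob)[8 + head_len:]
    cache = {}
    for name, meta in header.items():
        data = array("f")
        data.frombytes(body[meta["offset"]:meta["offset"] + meta["nbytes"]])
        cache[name] = (tuple(meta["shape"]), data)
    return cache


# Toy cache: one layer, 2 tokens x 3 dims for keys and values.
toy_cache = {
    "layer0.k": ((2, 3), array("f", [0.0, 1.0, 2.0, 3.0, 4.0, 5.0])),
    "layer0.v": ((2, 3), array("f", [5.0, 4.0, 3.0, 2.0, 1.0, 0.0])),
}
blob = serialize_kv(toy_cache)
restored = restore_kv(blob)
print(restored == toy_cache)
```

Restoring is a matter of reading bytes back into place, which is why it can be much cheaper than re-running the model over the whole context to rebuild the cache.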

Driftwood aims to build on this foundation by supporting actor snapshots, checkpoint portability, and multi-model compatibility. The project is still in its early stages, but the zero-copy chain has proven viable, and future work will focus on testing larger models and ensuring robustness at scale.

Key Concepts

Unified Memory Architecture: A system architecture where the CPU and GPU share the same physical memory, allowing direct data access without transferring data across a bus.

Zero-copy: A method of data handling where data is accessed directly in its original location without creating additional copies, reducing overhead and latency.

Category

Technology

Original source

https://abacusnoir.com/2026/04/18/zero-copy-gpu-inference-from-webassembly-on-apple-silicon/

Summarized by Mente
